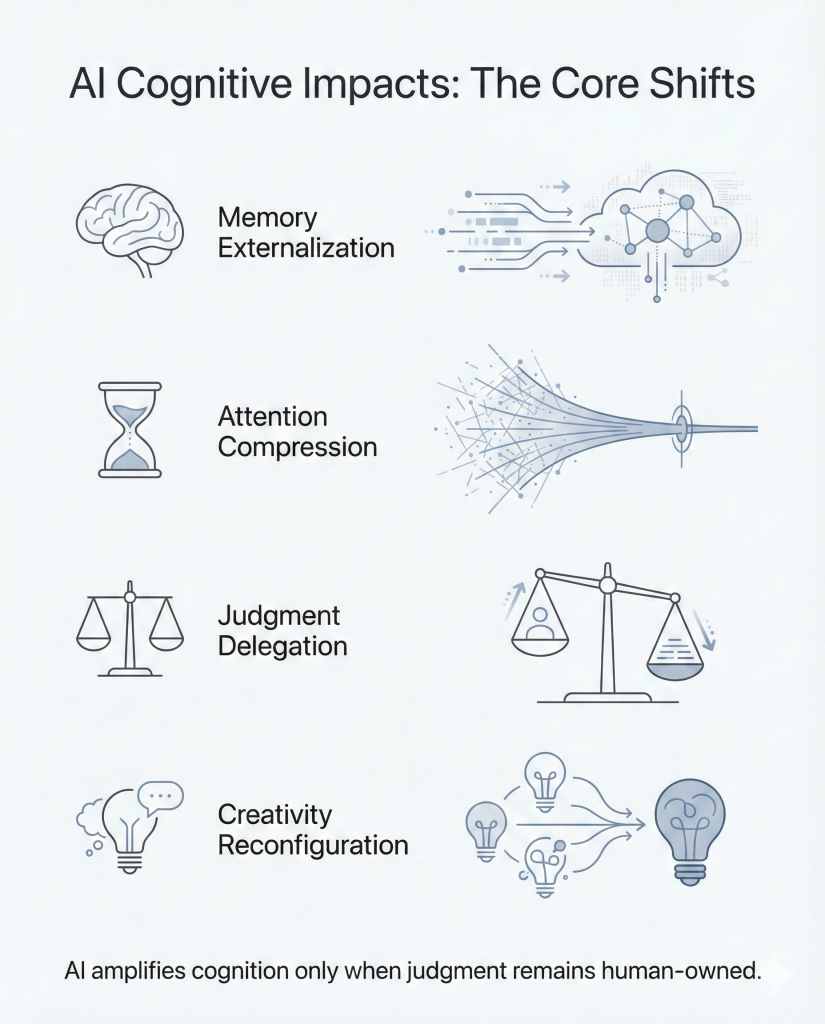

The most important impact of AI may not be what it automates, but what it changes in the human mind. As people increasingly rely on AI to explain, summarize, compare, suggest, and decide, the deeper shift is cognitive: memory is being externalized, attention compressed, judgment delegated, creativity reconfigured, beliefs subtly shaped, and mental effort reduced.

Over time, these changes do not just affect productivity. They influence how people think, learn, evaluate, and form trust. This is why the real question is no longer whether AI is useful. It is how repeated AI use is reshaping human cognition — and what that means for judgment, autonomy, and the future quality of thought.

Taken together, these shifts do not simply change how people use tools. They change what becomes cognitively normal.

Why AI Changes More Than Output

AI is often discussed in terms of productivity, automation, and efficiency. But its deeper impact is cognitive. It does not only change how quickly people complete tasks. It changes how they remember, attend, evaluate, create, and decide.

This is what makes AI different from most earlier tools. A calculator speeds up calculation. A search engine improves retrieval. But AI increasingly participates in the intermediate stages of thinking itself: summarizing complexity, proposing language, narrowing options, and shaping first interpretations.

That shift matters because cognition is not only about getting to an answer. It is also about how the mind arrives there. When AI becomes a regular partner in explanation, comparison, drafting, and recommendation, it can gradually influence what people notice, what they ignore, what they question, and what they accept too quickly.

The issue, then, is not whether AI affects thinking. It already does. The more important question is which kinds of cognitive change it is accelerating, and whether those changes strengthen human judgment or quietly weaken it.

The 6 Cognitive Shifts AI Is Accelerating

1. Memory Externalization

AI makes it easier to offload recall. Instead of storing information internally, people increasingly rely on external systems to retrieve, summarize, and reassemble what they need on demand.

This can be useful. It reduces cognitive load, speeds up access, and frees mental space for higher-order work. But it also changes the relationship between knowing and finding. When recall becomes consistently outsourced, internal knowledge can weaken, especially when people stop rehearsing, organizing, and connecting information for themselves.

The risk is not simply forgetting facts. It is losing the cognitive structure that comes from holding ideas in the mind long enough to compare them, challenge them, and build new insight from them.

2. Attention Compression

AI rewards speed. It shortens the path between question and answer, reduces friction, and makes fast closure feel normal.

That convenience has a cognitive cost. As people grow used to immediate synthesis, tolerance for ambiguity, delay, and sustained mental effort can decline. The mind begins to prefer compressed interpretation over extended engagement.

Attention compression does not only reduce focus time. It can also narrow curiosity. When answers arrive too quickly, people may stop exploring competing perspectives, following uncertainty, or staying with complex ideas long enough for deeper understanding to emerge.

3. Judgment Delegation

One of AI’s most significant cognitive effects is that it does not merely provide information. It increasingly suggests what to prioritize, what to say, what to choose, and what to believe is most likely correct.

This makes judgment easier to outsource.

That matters because judgment is not the same as selection. It involves weighing context, interpreting consequence, and deciding what matters under uncertainty. When AI becomes a default recommender, people may retain nominal control while quietly surrendering evaluative responsibility.

This is also where the topic connects directly to the AI Decision Boundary Framework 2026. The question is not only whether AI can assist thinking, but when assistance begins to turn into authority.

4. Creativity Reconfiguration

AI changes creative work by making generation easier, variation faster, and first drafts almost immediate.

This does not eliminate creativity, but it changes where creativity happens. Instead of beginning from a blank page, people increasingly begin from options, suggestions, and generated patterns. In some cases, that can expand experimentation. In others, it can narrow originality by making familiar structures feel like natural starting points.

The deeper shift is that creativity becomes less about raw generation and more about direction, taste, selection, and editorial judgment. That can be productive, but it also risks reducing the role of struggle, discovery, and unexpected formulation in the creative process.

5. Epistemic Drift

AI can subtly shape not only what people know, but how they form confidence in what they know.

When fluent answers arrive quickly and coherently, they can create a false sense of understanding. People may feel informed without having tested the underlying assumptions, checked competing interpretations, or examined the quality of the source material.

This is epistemic drift: a gradual shift in how certainty is formed. Confidence begins to attach to fluency, coherence, and convenience rather than verification, depth, or intellectual struggle.

Over time, this can make belief formation more passive. People do not necessarily become less informed in a visible way. They become less rigorous in how they decide what deserves trust.

6. Cognitive Debt

Cognitive debt accumulates when short-term convenience repeatedly substitutes for long-term mental effort.

Like technical debt, it does not always create immediate failure. In fact, the system may seem more efficient for a long time. But repeated offloading of recall, evaluation, synthesis, and careful reasoning can weaken the very capacities people later need in higher-stakes situations.

The problem is not that AI helps. The problem is that consistent over-reliance can leave people less practiced in the forms of thinking they assume they still possess.

This is one of the clearest distinctions between AI-assisted thinking and AI-dependent thinking. Assistance extends cognition. Dependence can erode it.

AI-Assisted Thinking vs AI-Dependent Thinking

Not all AI use weakens cognition. In many cases, AI can extend it.

The difference lies in whether AI is being used to support thinking or to replace the effort that thinking requires.

AI-assisted thinking strengthens human cognition when the system helps a person clarify, explore, compare, draft, or test ideas while the user remains actively engaged in evaluation and judgment. In this mode, AI acts as a cognitive amplifier. It increases range, speed, and iteration without removing intellectual ownership.

AI-dependent thinking emerges when the system becomes the default source of interpretation, wording, prioritization, and confidence. In this mode, the user still appears involved, but much of the real cognitive work has already been outsourced. The result is not just convenience. It is gradual skill atrophy.

The distinction matters because the same tool can produce opposite outcomes depending on how it is used.

AI-assisted thinking tends to:

- expand exploration
- accelerate iteration
- reduce mechanical friction
- preserve judgment
- strengthen output without hollowing out ownership

AI-dependent thinking tends to:

- narrow curiosity
- reward passive acceptance
- reduce evaluative effort
- weaken recall and synthesis
- increase confidence without increasing understanding

A practical test is simple: after using AI, is the person thinking more clearly, or merely arriving faster?

If the system helps someone reason better, challenge assumptions, and make stronger decisions, it is assisting cognition. If it encourages fast closure, borrowed certainty, and reduced mental effort, it is creating dependency.

The long-term issue is not whether AI saves time. It is whether repeated use leaves people cognitively stronger or cognitively softer.

This distinction also aligns with broader international AI governance thinking. The OECD AI Principles promote AI that is trustworthy and respects human rights and democratic values, which reinforces the case for using AI to extend human capability without displacing accountability.

When AI Strengthens Cognition — and When It Weakens It

AI does not have a single cognitive effect. Its impact depends on the kind of task, the level of user engagement, and whether the system is being used to extend judgment or bypass it.

AI tends to strengthen cognition when it helps people do better thinking, not less thinking.

That usually happens when AI is used to:

- surface options the user still evaluates
- summarize material the user still checks
- accelerate drafting while preserving editorial judgment
- clarify complexity without removing critical interpretation
- support learning without replacing effortful understanding

In these cases, AI reduces friction but does not remove intellectual responsibility. The user remains mentally present. The system expands capacity without becoming a substitute for discernment.

AI tends to weaken cognition when it becomes the default source of closure.

That usually happens when AI is used to:

- provide answers that are accepted too quickly
- replace recall with constant retrieval
- replace synthesis with fluent compression
- replace judgment with recommendation
- reduce the felt need for ambiguity, patience, and reflection

In these cases, the problem is not visible failure. It is gradual dependence. People may still perform well in the short term while becoming less practiced in the deeper forms of thinking they will later need.

The difference often comes down to one question: Is AI helping the mind work harder on better problems, or helping it avoid the effort that good thinking requires?

That is the real boundary.

What Leaders, Educators, and Knowledge Workers Should Watch

The cognitive effects of AI are not only personal. They are institutional. Over time, they can change how teams reason, how students learn, how professionals exercise judgment, and how organizations define competence.

Leaders should watch for signs that AI is increasing output while weakening ownership. A team that produces more content, more summaries, or more recommendations is not necessarily thinking better. If fewer people can explain the reasoning behind the work, defend the choices made, or challenge the system’s assumptions, cognitive dependency may already be forming.

Educators should watch for the difference between assisted understanding and outsourced effort. AI can support learning when it helps students compare ideas, test interpretations, and receive feedback. It weakens learning when it becomes a shortcut around struggle, memory formation, and genuine comprehension.

Knowledge workers should watch for subtle changes in their own habits. These include reduced patience for complexity, lower tolerance for ambiguity, weaker recall, faster trust in fluent output, and a growing tendency to ask the system before thinking independently.

The practical issue is not whether AI belongs in modern work. It does. The issue is whether people and institutions are using it in ways that preserve judgment, build capability, and keep intellectual responsibility in human hands.

This concern is also reflected in UNESCO’s guidance on generative AI in education and research. It argues for a human-centred approach that protects human agency and promotes age-appropriate use, rather than uncritical dependence on AI systems.

FAQs

What are AI cognitive impacts?

AI cognitive impacts are the changes in human memory, attention, judgment, reasoning, and creativity that result from repeated interaction with AI systems. These impacts affect how people think, not just what tasks they perform.

Does AI make people less intelligent?

AI does not inherently reduce intelligence, but it can weaken independent thinking if users outsource judgment and effort.

What is cognitive debt?

Cognitive debt is the long-term cost of repeatedly offloading mental effort to AI. Over time it can weaken independent reasoning and make synthesis shallower.

How can humans use AI without losing thinking skills?

By thinking before prompting, verifying outputs, and deliberately keeping some effortful work, such as recall and synthesis, for themselves. They should also retain accountability for decisions.