Executive Overview

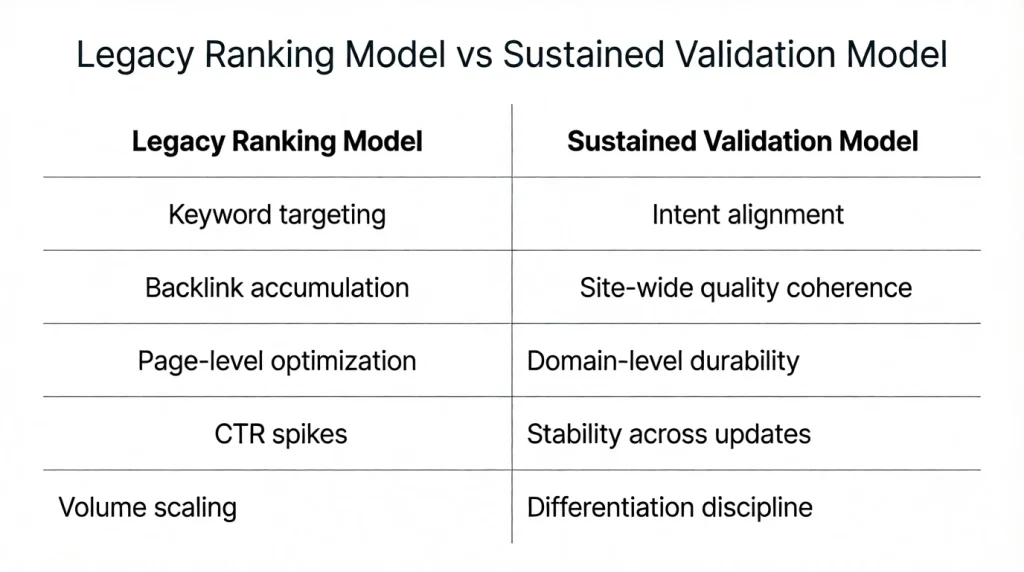

Over the past several years — particularly since the rollout of the Helpful Content system in 2022 — Google’s Core Updates have not replaced traditional ranking systems.

They have recalibrated them.

The structural change is not that ranking no longer matters.

It is that ranking alone no longer sustains durable visibility.

Organic distribution today operates across:

- Core search results

- Google Discover

- AI-generated Overviews

- Related content surfaces

These systems still rely on relevance, technical quality, and authority signals. Increasingly, however, durability depends on whether content continues to meet user expectations over time.

This pillar introduces the Sustained Validation Model — a framework for understanding this shift:

Exposure creates opportunity.

Sustained validation preserves distribution.

I. What Google Officially Confirms (No Inference)

Before building a thesis, we isolate confirmed statements.

1. Helpful Content Is Site-Wide

Google states that the Helpful Content system generates signals that can be applied across content on a site.

This confirms:

- Evaluation is not purely page-isolated.

- Site-level quality matters.

However, Google also clarifies that this system operates alongside other ranking systems — not as a replacement.

2. Discover Is Interest-Driven, Not Query-Driven

“Discover makes it possible to find content without searching.”

Discover surfaces content based on user interests inferred over time.

Google does not publicly confirm specific engagement metrics used in ranking decisions. It does emphasize content quality, freshness, and alignment with user interests.

3. Quality Rater Guidelines Emphasize Experience & Trust

Google’s Search Quality Rater Guidelines highlight:

- Experience

- Expertise

- Authoritativeness

- Trustworthiness

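These are not direct ranking factors. Google describes rater feedback as a way to assess how well its ranking systems are performing, not as an input that ranks individual pages.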

Why This Matters

These documents confirm three realities:

- Quality evaluation can apply site-wide.

- Discover distributes without explicit search queries.

- Trust and experience are emphasized in evaluation frameworks.

What they do not confirm:

- Exact behavioral thresholds

- Direct use of dwell time as a ranking factor

- Precise feedback loops between Discover and core ranking

Any model must respect that boundary.

II. Defining Sustained Validation (Operational, Without Speculation)

To avoid rhetorical abstraction:

Sustained Validation = Repeated alignment between user intent (or interest) and delivered content value over time.

This does not require assuming hidden engagement metrics.

It can be observed through:

- Content remaining eligible for Discover

- Stable search visibility across updates

- Continued inclusion in AI-generated summaries

- Absence of post-update collapse

Validation is therefore observable through durability — not assumed through internal metrics.

III. The Structural Shift: From Stability to Ongoing Evaluation

Historically, ranking followed this logic:

Relevance + Authority + Technical Compliance → Stable Position

Core updates introduced more volatility. Sites once stable experienced significant shifts after broad quality reassessments.

Industry-reported volatility patterns (for example, after the Helpful Content rollout and subsequent broad core updates) suggest:

- Sites with heavy template-driven content often saw broad declines.

- Sites emphasizing original reporting and domain expertise often recovered.

(See: Search Engine Roundtable coverage of multiple core updates)

While anecdotal industry observation is not official confirmation, volatility patterns consistently align with quality-based recalibration.

IV. The Sustained Validation Model (Refined & Bounded)

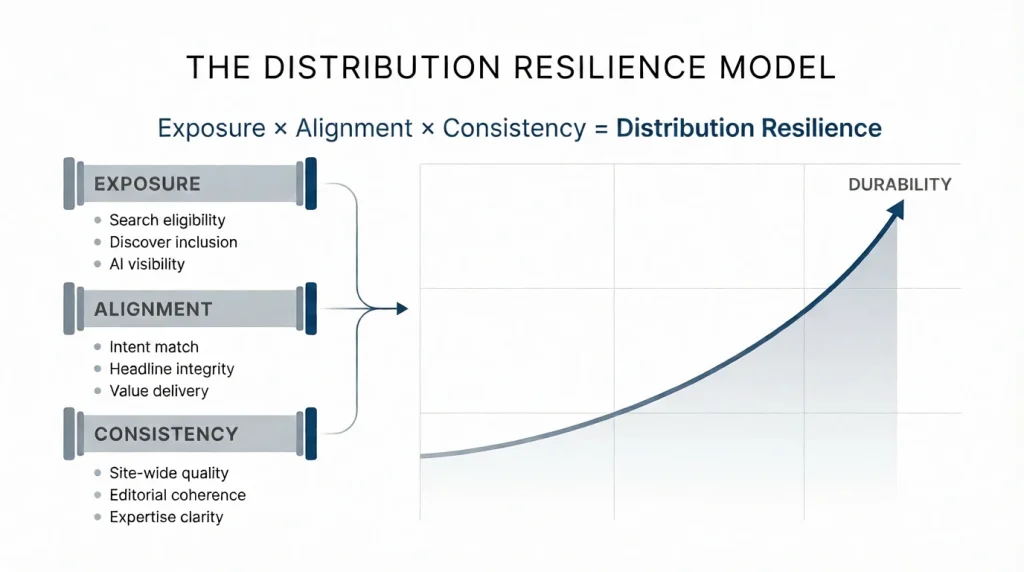

We define:

Distribution Resilience = Exposure × Alignment × Consistency

Where:

Exposure = Eligibility across surfaces

Alignment = Content meets user expectation

Consistency = Quality standards maintained site-wide

This model does not claim Google uses this formula.

It describes observable durability patterns across updates.
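As a worked illustration of the multiplicative form, the sketch below scores each factor on the 0 to 5 scale used by the scorecard, rescales it to 0..1, and multiplies. The `SurfaceRatings` class, the `distribution_resilience` function, and the example ratings are hypothetical names and values introduced here for illustration. They are not part of any Google system, and the numbers are not measurements.

```python
from dataclasses import dataclass

MAX_RATING = 5  # assumed scale: 0 (not at all true) to 5 (strongly true)


@dataclass(frozen=True)
class SurfaceRatings:
    """Hypothetical self-assessed ratings for the three factors of the model."""
    exposure: float     # eligibility across surfaces
    alignment: float    # content meets user expectation
    consistency: float  # quality standards maintained site-wide


def distribution_resilience(ratings: SurfaceRatings) -> float:
    """Rescale each rating to 0..1 and multiply the three factors."""
    factors = (ratings.exposure, ratings.alignment, ratings.consistency)
    for value in factors:
        if not 0 <= value <= MAX_RATING:
            raise ValueError(f"rating {value} is outside 0..{MAX_RATING}")
    score = 1.0
    for value in factors:
        score *= value / MAX_RATING
    return score


# Illustrative inputs only: a balanced site versus one with weak consistency.
balanced = distribution_resilience(SurfaceRatings(4, 4, 4))
lopsided = distribution_resilience(SurfaceRatings(5, 5, 1))
print(f"balanced={balanced:.2f} lopsided={lopsided:.2f}")
```

Because the factors multiply rather than add, a single weak factor caps the result: the balanced site scores roughly 0.51, while the site with perfect exposure and alignment but poor consistency scores roughly 0.20.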

V. Where AI Changes the Equation (Without Overreach)

AI does not inherently violate Google policy.

Google explicitly states that AI-generated content is acceptable if it is helpful and created for users.

The issue is not AI itself.

The issue is interchangeable content.

When large volumes of similar, structurally correct content are produced:

- Differentiation declines.

- Distinctive perspective weakens.

- Editorial accountability becomes less visible.

In that environment, content that demonstrates clear expertise and contextual judgment stands out.

AI accelerates supply.

Evaluation systems must differentiate quality.
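One way a publisher might screen its own pages for interchangeable content is to compare their word overlap. The sketch below uses three-word shingles and Jaccard similarity. The function names, the 0.6 threshold, and the sample pages are assumptions chosen for illustration; this is an internal audit heuristic, not a description of how Google evaluates content.

```python
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word sequences of length `size`."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles across both sets."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def interchangeable_pairs(pages: dict[str, str], threshold: float = 0.6):
    """List page pairs whose similarity meets an assumed threshold."""
    sets = {url: shingles(body) for url, body in pages.items()}
    flagged = []
    for (url_a, set_a), (url_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            flagged.append((url_a, url_b, score))
    return flagged


# Hypothetical page bodies: two templated location pages and one first-hand note.
pages = {
    "/plumber-austin": "find the best plumber in austin with our trusted local guide to prices and reviews",
    "/plumber-dallas": "find the best plumber in dallas with our trusted local guide to prices and reviews",
    "/pipe-repair-notes": "we replaced a cracked cast iron stack last winter and documented each step with costs",
}
flagged = interchangeable_pairs(pages)
for url_a, url_b, score in flagged:
    print(url_a, url_b, round(score, 3))
```

In this sample, only the two location pages are flagged, because swapping a city name leaves most of the text identical. A real audit would need a threshold tuned to the site and would strip shared navigation and boilerplate before comparing.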

VI. Discover and Search: Related but Distinct

It would be overreach to claim Discover directly determines search ranking.

However:

- Discover operates as a rapid distribution surface.

- It surfaces content based on interest signals.

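- Industry observation suggests that content which underperforms in Discover may see reduced Discover visibility later, although Google does not document this mechanism.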

Whether Discover performance directly influences core search is undocumented.

What is defensible:

Discover puts content in front of large audiences who did not search for it, so its reception there is shaped by interest rather than by query intent.

Durable search ranking still depends on traditional factors, which now operate alongside broader quality signals.

VII. Governance Implications (Evidence-Based)

Because evaluation can be site-wide, leadership should consider:

- Reducing low-value, template-based content.

- Auditing for editorial consistency.

- Aligning headlines precisely with content delivery.

- Monitoring visibility stability across updates, not just traffic spikes.

A practical governance recommendation:

Conduct post-update audits focusing on:

- Sections disproportionately affected

- Content clusters with repetitive structure

- Pages lacking demonstrable expertise markers

These are observable, measurable interventions.
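A post-update audit of this kind can start with a simple section-level comparison. The sketch below groups pages by their first URL path segment, compares clicks before and after an update, and flags sections that fell noticeably further than the site as a whole. The row format, the 15-point margin, and the sample figures are assumptions for illustration; real data would come from an analytics or Search Console export.

```python
from collections import defaultdict


def section_of(path: str) -> str:
    """Use the first path segment as the section, e.g. '/guides/a' -> '/guides'."""
    parts = [part for part in path.split("/") if part]
    return "/" + parts[0] if parts else "/"


def section_changes(rows):
    """Return the fractional change in clicks per section (after vs. before)."""
    totals = defaultdict(lambda: [0, 0])
    for path, before, after in rows:
        bucket = totals[section_of(path)]
        bucket[0] += before
        bucket[1] += after
    return {
        section: (after - before) / before
        for section, (before, after) in totals.items()
        if before > 0
    }


def disproportionately_affected(rows, margin: float = 0.15):
    """Sections whose change is worse than the site-wide change by `margin`."""
    site_before = sum(before for _, before, _ in rows)
    site_after = sum(after for _, _, after in rows)
    if site_before == 0:
        return []
    site_change = (site_after - site_before) / site_before
    changes = section_changes(rows)
    return sorted(s for s, change in changes.items() if change < site_change - margin)


# Hypothetical (path, clicks_before, clicks_after) rows for equal-length windows.
rows = [
    ("/guides/a", 1000, 950),
    ("/guides/b", 500, 520),
    ("/locations/x", 800, 300),
    ("/locations/y", 700, 250),
    ("/news/n", 400, 390),
]
affected = disproportionately_affected(rows)
print(affected)
```

With these sample rows, the site as a whole loses about 29 percent of clicks, but nearly all of the loss sits in one section, which is the one the function returns. The comparison shows where to look first; it does not explain why the section declined.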

VIII. What This Does Not Mean

To remain precise:

- Backlinks remain a ranking factor.

- Technical SEO remains foundational.

- Keyword relevance remains necessary.

- Google does not confirm direct behavioral ranking signals.

The shift is not elimination.

It is rebalancing.

IX. The Sustained Validation Model: Core Thesis

Organic distribution today requires more than ranking.

It requires durability.

Durability depends on:

- Maintaining site-wide quality

- Demonstrating expertise clearly

- Avoiding interchangeable content

- Aligning content with real user expectations

This is not about gaming engagement metrics.

It is about building systems that consistently meet user intent — across surfaces — over time.

Distribution Resilience Scorecard

Rate each statement from 0 (Not at all true) to 5 (Strongly true).

Appendix A: Empirical Volatility Patterns Across Recent Core Updates

This appendix does not attempt to reverse-engineer Google’s algorithm.

Instead, it examines observable industry volatility patterns following major core updates and aligns them with documented guidance from Google.

A.1 Core Update Volatility Is Structural, Not Tactical

Google describes core updates as broad changes to search systems designed to improve overall quality and relevance.

Core updates are not targeted penalties. They are re-evaluations.

Empirical pattern observed across multiple updates (2022–2024):

- Significant ranking shifts across entire domains

- Quality reassessment rather than isolated page demotions

- Delayed recovery even after tactical on-page changes

Industry tracking (e.g., SEMrush Sensor, MozCast, SISTRIX visibility index) consistently shows widespread volatility during these periods.

These tools do not explain causation, but they document volatility intensity.

The takeaway:

The volatility is consistent with core updates reassessing quality broadly across a site rather than adjusting isolated keywords.

This supports the durability framing — stability now depends on broader quality coherence.

A.2 Helpful Content Update & Template-Heavy Sites

Following the initial Helpful Content rollout (August 2022) and its subsequent integration into Google's core ranking systems (March 2024), industry case studies documented:

- Declines in sites heavily reliant on scaled, template-driven content

- Recoveries in sites emphasizing domain expertise and editorial authority

Example coverage:

Search Engine Roundtable – Helpful Content Impact Analysis

While anecdotal, these documented patterns consistently show:

- Volume without differentiation correlates with higher volatility

- Editorially distinctive content demonstrates stronger recovery patterns

This does not prove Google measures “dwell time.”

It suggests that quality-based reassessment can affect entire domains.

A.3 Discover Eligibility & Visibility Stability

Google Discover documentation emphasizes:

- Content must align with user interests

- Misleading or exaggerated headlines can reduce eligibility

- Consistent quality is required

Observed industry pattern:

Sites relying on headline inflation often see unstable Discover traffic spikes followed by visibility contraction.

Discover traffic is characteristically volatile. However, publishers with:

- Clear editorial voice

- Reliable topical authority

- Consistent delivery alignment

tend to show more stable Discover inclusion over time.

Important:

There is no public confirmation that Discover performance directly influences core search ranking.

The observable pattern is limited to Discover distribution durability.

A.4 AI-Generated Content & Update Sensitivity

Google’s position:

AI-generated content is acceptable if helpful and created for users.

Industry reporting on core updates from 2023 onward described:

- Heavy volatility among sites rapidly scaled with low-differentiation AI content

- Relative resilience among brands combining AI drafting with editorial oversight

Again:

This is pattern observation, not algorithm disclosure.

But volatility disproportionately impacted scaled, low-differentiation content clusters.

A.5 Stability vs Spike Pattern

Across multiple updates, a recurring pattern emerges:

Sites optimized for:

- Sustainable topical authority

- Editorial consistency

- Depth over volume

tend to show:

- Lower amplitude visibility swings

- Faster recovery after updates

Sites optimized for:

- High-volume publishing

- Thin keyword capture

- Headline-driven traffic extraction

tend to show:

- Higher volatility amplitude

- Slower recovery cycles

This pattern aligns with Google’s publicly stated emphasis on:

- People-first content

- Site-level quality

- Avoidance of content created primarily for search engines
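The two measures named above, swing amplitude and recovery speed, can be made concrete for any visibility series. The sketch below is one possible definition, not an industry standard: amplitude is the peak-to-trough range relative to the mean, and recovery is the number of periods after an update until the series returns to within 95 percent of its pre-update average. The function names, the tolerance, and both sample series are assumptions for illustration.

```python
def swing_amplitude(series: list[float]) -> float:
    """Peak-to-trough range of the series, relative to its mean."""
    mean = sum(series) / len(series)
    if mean == 0:
        return 0.0
    return (max(series) - min(series)) / mean


def recovery_periods(series: list[float], update_index: int, tolerance: float = 0.95):
    """Periods from the update until visibility is back above the baseline floor.

    Returns 0 if the series never drops below the floor after the update,
    and None if it drops and has not recovered by the end of the series.
    """
    pre_update = series[:update_index]
    if not pre_update:
        raise ValueError("need at least one pre-update observation")
    floor = tolerance * (sum(pre_update) / len(pre_update))
    dropped = False
    for offset, value in enumerate(series[update_index:]):
        if value < floor:
            dropped = True
        elif dropped:
            return offset
    return None if dropped else 0


# Hypothetical weekly visibility index values; the update lands at index 4.
steady = [100, 102, 98, 100, 90, 97, 101, 100]
spiky = [100, 180, 60, 100, 40, 45, 50, 70]

for name, series in (("steady", steady), ("spiky", spiky)):
    print(name, round(swing_amplitude(series), 2), recovery_periods(series, 4))
```

In these invented series, the steady site dips once and is back above the floor one period later, while the spiky site shows a much larger amplitude and has not recovered by the end of the window. Tracking both numbers across several updates gives a durability view that a single traffic snapshot does not.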

A.6 What This Appendix Does Not Claim

To remain precise:

- It does not claim Google uses dwell time as a ranking factor.

- It does not claim Discover directly influences core search ranking.

- It does not claim backlinks are irrelevant.

- It does not claim AI content is penalized categorically.

It demonstrates:

Volatility patterns consistently align with quality reassessment and domain-level evaluation — not tactical keyword changes.

A.7 Empirical Reinforcement of the Core Thesis

The pillar thesis states:

Distribution durability depends on sustained quality coherence across surfaces.

Empirical update volatility supports:

- Site-wide reassessment

- Quality-driven recalibration

- Greater sensitivity to interchangeable content

What changes is not the existence of ranking signals.

What changes is the tolerance for fragility.

Visibility can be gained tactically.

Durability requires systemic quality.

The Sustained Validation Model matters more as AI-driven search makes attribution harder: some agentic systems may draw on a business's content and signals without generating visibility data that the business can measure.