Artificial intelligence has become part of how young people read, write, study, solve problems, and make sense of the world around them. That much is obvious. The harder question – the one that matters more over the long term – is whether AI is strengthening their minds or quietly weakening the habits that real learning depends on.

That is the more serious issue.

Most of the public debate about AI and young people is stuck in the wrong place. It circles around cheating, shortcuts, and whether students are using AI “too much.” Those questions are real, but they are not the deepest ones. The more important question is what repeated AI assistance is doing to the formation of judgment itself.

A 2026 narrative review in the journal AI by Giansanti and Cosenza, titled “Artificial Intelligence and Youth: Cognitive, Educational, and Behavioral Impacts,” maps this tension carefully. AI can improve efficiency, support content creation, increase engagement, and personalize learning. At the same time, excessive reliance may contribute to cognitive offloading, dependency, inflated self-confidence, reduced independent problem-solving, and weaker critical oversight. The authors are careful – they frame the field as emerging and openly acknowledge the limits of the evidence. But the pattern they describe is serious enough to deserve close attention.

That pattern is not simply about screen time. It is about what happens when a generation becomes highly fluent in assistance before it becomes equally fluent in verification, reflection, and intellectual resistance.

The wrong debate: this is not mainly about cheating

Much of the reaction to AI in education has been moral and procedural. Are students using it to write essays? Is it making assignments meaningless? Are teachers losing control?

Those are surface concerns. The deeper issue is developmental.

Education is not only about producing acceptable outputs. It is also about building the internal machinery that makes strong outputs possible in the first place: attention, memory, frustration tolerance, inference, self-correction, and the capacity to work through uncertainty without immediate rescue.

When AI becomes the default first move, it can change that developmental pathway.

The review highlights that AI and generative AI can be genuinely beneficial in learning contexts – particularly through personalized support, efficiency, and engagement. But it also notes that frequent reliance may reduce deep engagement with learning materials, interfere with reading comprehension and vocabulary acquisition in some contexts, and encourage students to bypass effortful cognitive work. That matters because effort is not a side effect of learning. In many domains, it is part of the mechanism of learning.

This is why the real danger is not merely that a student submits AI-assisted work. It is that the student may gradually lose contact with the internal struggle through which knowledge becomes capability.

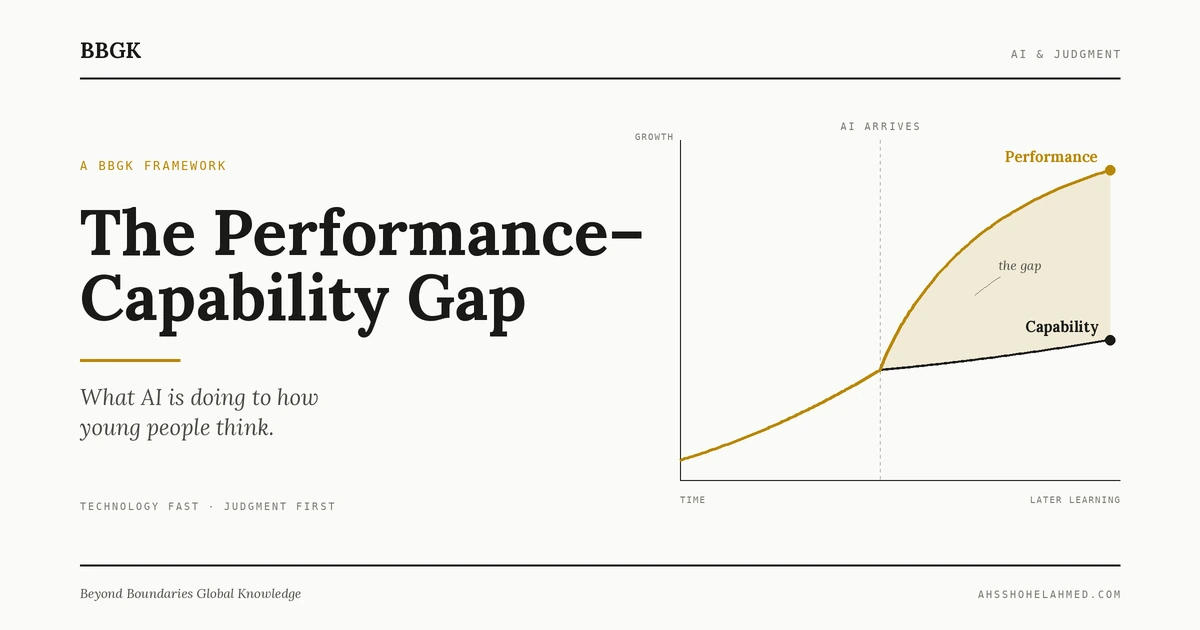

The Performance–Capability Gap

The central BBGK framing here is what can be called the Performance–Capability Gap:

The widening distance between what a young person can produce with AI assistance and what they can reliably do on their own.

Performance is what the work looks like when it leaves the student’s desk: the polished essay, the organized slide deck, the clean code, the fluent summary. Capability is what remains when the tool is taken away: the reasoning, the memory, the patience, the judgment, the ability to think through a problem that no one has pre-structured.

In the pre-AI classroom, these two moved together. A student who could write a strong essay had, almost by definition, practiced the reasoning that produced it. A student who could solve the problem had, almost by definition, built the cognitive structure underneath the solution.

AI breaks that coupling.

A student can now produce a strong-looking essay without having practiced the reasoning. A student can now arrive at a clean answer without having built the structure that produces clean answers. The performance rises. The capability does not always rise with it. In some cases, it drops.

That gap is the single most important thing adults should be watching in schools, at home, and in policy. It is what the research keeps pointing to from different angles. The Giansanti and Cosenza review calls out inflated self-efficacy, cognitive offloading, and the illusion of explanatory depth: different names for the same underlying pattern, in which students overestimate what they understand because the tool has done much of the work for them.

This is the same cognitive dynamic I’ve described in a different context in Knowledge Distance: AI extends fluency across adjacent domains faster than it builds the underlying expertise that would sustain that fluency without the tool. In youth, the same pattern shows up one level earlier, at the formation of the thinking apparatus itself.

Performance gains can hide capability loss

One reason AI is so persuasive is that it often works.

It helps students produce cleaner writing, organize ideas faster, summarize information quickly, and complete tasks with less friction. In the short term, these benefits are real. The review repeatedly acknowledges that AI can enhance learning efficiency, creativity, engagement, and professional productivity.

But performance is not the same as capability.

A polished answer can conceal shallow understanding. A fast result can mask dependency. A student may feel more competent because the work looks better, even when much of the underlying reasoning was borrowed rather than built.

That distinction matters far beyond the classroom.

In the AI era, visible fluency will be cheap. Many people will be able to produce competent-looking outputs. The scarcer advantage will be the ability to test, challenge, refine, reject, or responsibly override those outputs – the same evaluative work I’ve argued elsewhere is becoming the decisive human contribution as AI produces more persuasive-sounding advice. If education systems reward speed and surface polish without protecting deeper cognitive formation, they may end up producing students who look more capable than they really are.

That is not a minor educational issue. It is a long-term human capital issue.

The dependency paradox

The most counter-intuitive idea in the review is what the authors call the dependency paradox.

The common assumption is simple: more AI literacy should mean healthier AI use. If students understand the tool better, they should become less vulnerable to misuse. But the research presents a more complicated picture. Higher levels of AI literacy and trust may sometimes increase reliance rather than reduce it. Greater familiarity with AI can make people more likely to delegate cognitive work to it, especially when trust rises faster than critical oversight.

This is a crucial insight.

It means the solution is not just “teach students how to use AI.” That is necessary but incomplete. A student can become highly skilled at prompting, navigating outputs, and integrating AI into workflows while still becoming less independent in how they reason.

Tool fluency is not judgment.

Judgment does not emerge automatically from more use. In some cases, more use may actually erode the very habits that judgment requires: skepticism, patience, verification, intellectual humility, and the willingness to sit with partial understanding until it becomes real understanding.

Many “AI readiness” conversations in education and workforce policy remain too shallow on this point. They focus on adoption and competence without enough attention to dependency dynamics. They assume capability expands cleanly with access. The evidence suggests the relationship is more unstable than that.

Why young people are not just smaller adults in this equation

This matters especially for young people because they are not encountering AI after their cognitive habits are fully formed. They are encountering it during formation.

That changes the stakes.

The review treats adolescents, young adults, and early-career professionals as part of a broader pattern of AI engagement, citing data showing that nearly one-third of US children and adolescents aged 4 to 17 have used generative AI applications, with usage above 50% among 15- to 17-year-olds. The paper also argues that youth may be particularly sensitive to the effects of AI overuse because self-regulation, independent reasoning habits, and emotional regulation are still developing.

That point should not be dramatized into panic. But it should be taken seriously.

When assistance arrives early, the risk is not merely that it replaces effort on isolated tasks. The deeper risk is that it rewires the expected relationship between difficulty and progress. Instead of learning that hard thinking is normal, valuable, and often necessary, students may increasingly experience difficulty as something to bypass immediately.

That has second-order effects.

It can weaken resilience under cognitive strain. It can reduce tolerance for ambiguity. It can subtly shift the meaning of learning from building understanding to getting to acceptable output. Once that shift becomes habitual, it is hard to reverse – a pattern that overlaps meaningfully with what I’ve described as continuous partial attention, where the mind becomes trained to process breadth at the cost of depth.

The behavioral framing is real but should be handled carefully

The review also discusses compulsive or addiction-like patterns in AI use, and references emerging instruments such as the Conversational AI Dependence Scale (CAIDS) and the Problematic ChatGPT Use Scale (PCGUS) as ways to identify maladaptive engagement. It also explores parallels between AI dependency and broader technology-related behavioral patterns.

This is important, but it should be handled with care.

There is a temptation to reach for dramatic language too quickly. “AI addiction” is an attention-grabbing phrase, but it can collapse several different phenomena into one bucket: convenience, habituation, emotional reliance, compulsive use, academic outsourcing, and simple overuse. The review itself is more careful than headline culture usually is. It repeatedly notes that the evidence is still developing and that more longitudinal and cross-context research is needed.

The more useful interpretation, at least for now, is that AI may be reinforcing certain problematic tendencies that already exist in digital life: low-friction dependence, fragmented attention, habitual delegation, and weakened reflective discipline. That is serious enough without forcing a premature psychiatric frame onto everything.

The real educational question is not access – it is design

If the concern were simply that young people are using AI, the only available responses would be prohibition or resignation. Neither is realistic.

The better question is how AI is integrated.

The review argues that responsible AI integration requires more than access or enthusiasm. It calls for structured pedagogy, human oversight, reflective practice, metacognitive strategies, clear role boundaries, and ethical guidance – positions echoed in UNESCO’s Guidance for Generative AI in Education and Research and the European Commission’s Ethical Guidelines on AI in Teaching and Learning. In other words, AI should be used inside learning designs that force students to stay cognitively present.

That is the right direction.

The goal should not be to keep AI away from young people. The goal should be to ensure that AI does not do the wrong work for them.

There is a major difference between using AI to expand inquiry and using AI to replace inquiry. There is a difference between using it to challenge a draft and using it to spare oneself the discomfort of thinking. There is a difference between using it as an assistant and slipping into dependence on it as a substitute mind.

Good educational design should protect those differences.

Three markers of healthy AI integration in youth

The following diagnostic is derived from the review’s recommendations combined with BBGK’s broader framing of the Performance–Capability Gap. It is meant as a practical lens for parents, teachers, school leaders, and policymakers – not a scoring system, but a set of questions worth asking.

| Marker | Healthy integration looks like | Borrowed performance looks like |
|---|---|---|
| Visible reasoning | The student can explain why an AI output is strong, weak, incomplete, or wrong – in their own words, without the tool present. | The student can produce the output but cannot defend, challenge, or rebuild it when questioned. |
| Cognitive friction preserved | Hard thinking is still part of the workflow. AI is used after the student has engaged with the problem, not before. | AI is the first move. Difficulty is treated as a signal to prompt the tool rather than to sit with the problem. |
| Calibrated trust | The student treats AI outputs as draft material requiring verification, comparison, and override. | The student treats AI outputs as answers, with confidence scaling to how fluent the output sounds rather than how well it has been checked. |

The strongest uses of AI in youth learning are the ones that keep all three markers alive. They require visible reasoning, comparison, critique, and revision rather than passive acceptance of generated answers. They ask students to explain why an AI output is weak, not just whether it is usable. They preserve friction where friction is educationally productive.

From AI literacy to epistemic discipline

One of the most important shifts education now needs is from AI literacy to epistemic discipline.

AI literacy usually means understanding how tools work, how to prompt them, how to evaluate outputs, and how to use them effectively. That matters. But on its own, it is too operational.

Epistemic discipline goes further.

It asks whether the student knows when not to trust fluency. Whether they can distinguish clarity from truth, coherence from depth, confidence from accuracy, and speed from understanding. Whether they know how to hold a generated answer at arm’s length long enough to test it.

The review comes close to this idea through its emphasis on critical evaluation, epistemic vigilance, reflective use practices, and the need to balance trust with autonomy. That is the kind of cognitive posture schools and families should be trying to cultivate.

Because the future will not belong to the people who can simply use AI. That baseline will spread fast. The long-term advantage will belong to the people who can remain mentally active in the presence of AI – people who can collaborate with it without surrendering to it.

The broader signal behind the youth AI debate

This is not only a youth issue. It is a preview issue.

What is happening with young people is an early signal of a wider pattern. Across domains, AI is making polished output cheaper and cognitive effort easier to avoid. The same tension now visible in students will increasingly shape professionals, managers, researchers, and creators: convenience versus capability, assistance versus autonomy, fluency versus judgment. The same core logic shows up in how organizations decide where AI should decide and where humans must intervene.

That is why youth matter so much here.

The habits formed early often become the defaults carried forward. If a generation grows up with AI as a constant cognitive partner, the question is not whether that will change how they think. It will. The more important question is whether the surrounding institutions are shaping that change wisely.

Right now, many are not. They are racing to integrate AI into workflows, classrooms, and expectations without a sufficiently serious theory of what human abilities must be protected in the process.

That is the strategic failure to avoid.

A better standard for the AI era

The right standard is not anti-AI. It is not nostalgia for a pre-AI classroom. It is not a moral panic about tools. And it is not a fantasy that young people can or should grow up untouched by intelligent systems.

The right standard is simpler and harder:

Use AI in ways that expand thought without replacing the work that builds it.

That means judging AI use not only by efficiency or output quality, but by what it does to memory, reasoning, self-regulation, and independent judgment over time. It means treating visible performance as an incomplete measure of educational success. And it means recognizing that the deepest cost of overreliance may not be immediate failure but a quieter erosion of the habits that strong minds depend on – a cognitive version of the gradual wear I’ve described elsewhere in how modern systems reshape the people inside them.

AI may help young people perform. In many cases, it already does.

The more important question is whether we are teaching them how to think in a world where performance can be borrowed.

That is where the real future is being decided.

Frequently asked questions

What is the Performance–Capability Gap?

The Performance–Capability Gap is the widening distance between what a young person can produce with AI assistance and what they can reliably do without it. It describes a situation in which visible output quality rises while the underlying cognitive capability that would normally sustain that output does not rise with it – and in some cases declines.

Is AI harmful to young people’s cognitive development?

The evidence is still emerging. The 2026 review by Giansanti and Cosenza is clear that AI offers real benefits – personalized learning, efficiency, engagement, creativity – but that excessive or unmoderated reliance may contribute to cognitive offloading, inflated self-efficacy, reduced independent problem-solving, and weaker critical oversight. The honest answer is that how AI is used matters more than whether it is used.

What is the dependency paradox?

The dependency paradox describes a counter-intuitive finding in recent AI research: higher AI literacy and trust can increase reliance on AI rather than reduce it. More familiarity with the tool often leads to more cognitive delegation, especially when trust rises faster than critical oversight. This is why teaching students to use AI is necessary but insufficient – it does not automatically protect them from dependency.

What is cognitive offloading?

Cognitive offloading is the habit of using an external tool to do thinking work that would otherwise have to be done internally. Calculators, search engines, and now generative AI all involve some form of offloading. With AI, the concern is that offloading has become faster, broader, and more comprehensive – covering not only information retrieval but reasoning, judgment, and creative work, particularly during developmental years when those capacities are still being formed.

How should schools and parents respond?

The evidence points toward design over prohibition. Useful interventions include structured pedagogy that keeps AI inside learning tasks rather than replacing them, required visible reasoning and verification by the student, clear role boundaries for when AI is and is not appropriate, and a shift from teaching AI literacy alone to cultivating epistemic discipline: the capacity to evaluate, challenge, and override AI outputs rather than passively accept them.

Source note

This article is informed primarily by the 2026 narrative review “Artificial Intelligence and Youth: Cognitive, Educational, and Behavioral Impacts” by Daniele Giansanti and Claudia Cosenza, published in AI (MDPI), which synthesizes emerging literature on AI’s benefits, risks, dependency patterns, and responsible integration strategies for youth and early-career users. The paper is useful as a conceptual map; its authors are transparent about the limitations of the evidence base and the non-systematic nature of the review. Additional contextual data is drawn from JAMA Network Open and Common Sense Media. Policy framing draws on UNESCO’s Guidance for Generative AI in Education and Research and the European Commission’s Ethical Guidelines. The Performance–Capability Gap framework is a BBGK interpretation synthesizing these sources; it is not claimed by the cited authors.

Further reading from BBGK

- Knowledge Distance: the real limit of generative AI – Why AI extends adjacent capability faster than it builds domain-native judgment.

- When AI gives bad advice – Why the risk is not robotic output but persuasive output that is not yet reliable.

- The AI Decision Boundary Framework – Where AI should decide, and where humans must intervene.

- How AI is reshaping memory, attention, and judgment – What changes in the human mind when AI mediates cognition.

- Continuous partial attention – The state of almost-present attention that modern systems train into us.